Highlights of the Real World Cryptography 2015 workshop, day #3

The 2015 edition of the Real World Cryptography workshop was held in London January 7-9. Here are some personal highlights of day #3 (I have not included a summary of all talks, and do not pay an equal amount of attention to all talks). I have also made reports for day #1 and day #2.

Session 11: TLS Practicalities

"Triple Handshake: Can cryptography, formal methods, and applied security be friends?" by Markulf Kohlweiss (Microsoft Research) and Karthik Bhargavan (INRIA)

Markulf and Karthik gave a funny duo presentation, where Markulf was the crypto theory guy while Karthik was the systems/practice guy.

TLS aims to achieve authenticity and confidentiality. In practice these are not achieved, however, mainly for two reasons. First TLS uses many obsolete cryptographic constructions (e.g. RSA encryption with PKCS#1 v1.5 padding susceptible to Bleichenbacher attack, MAC-then-pad-then encrypt with AES-CBC susceptible to a padding oracle attack, etc.). Solution: stop using them. Second there are many implementation challenges: memory safety, missing checks, certification validation errors, and state machine bugs. Solution: use type safe languages, verify the logical correctness of your code, and link software invariants with cryptographic properties.

Both approaches have been taken in the MiTLS project: a verified TLS implementation, that also allows 'cryptographic agility', i.e. different cipher suites to be used. (This latter thing is the main difference from other formal approaches to a verified TLS implementation.) MiTLS provides a standard scoket API for TLS with embedded security specification. The main security theorem shows that the concrete TLS and ideal TLS are computationally indistinguishable

In TLS you can resume a session and thus prevent doing a complete TLS handshake. The security proof of miTLS also covers this. It even has a security proof for renegotiation, which assumes that the server is honest. This is useful for client authentication that is typically implemented using a renegotiation.

Client authentication over TLS typically uses an inner authentication that blesses the outer unauthenticated channel. This requires that these two protocol layers are tied close together (to prevent man-in-the-middle attacks). The solution is to bind the user authentication to the TLS channel by mixing in a channel identifier in the client authentication sub-protocol. This requires that the man in the middle cannot influence the channel identifier. The question then is what to use for the channel identifier: the master secret, or the full handshake transcript. Problem is that in TLS, a malicious server can act as man in the middle and influence them both to make the channel identifier equal. This is what enables the so-called triple handshake attack.

There are several ways to fix this.

- Disallow server cert changes during negotiation

- USe only well known DH groups and validate DH keys

- no authentication after resumption

The root problem is that the master secret is not bound to the context. If you would create a good session identifier than all problems go away. For example by fixing the standard and define a session hash as the hash of the handshake log, and then compute the master secret as the hash of the premaster secret, the extended master secret and this session hash.

"Crypto at Scale" by Brian Sniffen (Akamai)

Akamai is a content delivery network (CDN), that runs 140.000 web servers as caching web-proxies. They received 1 quadrillion (10^15) request in 2104 of 60 KB each, serving roughly 20% of the web traffic.

For Akamai customers, TLS is a tool for making web requests fail! This may explain their responses when confronted with how Akamai wanted to deal with several SSL attacks that happened in 2014.

Akamai was surprised by the public response to Heartbleed. Customers demanded immediate fixes to the certificates Akamai controlled for them. Yet other customers, that did control their certificates themselves, simply waited until the certificate would expire anyway.

For the Poodle attack, the best response would be: turn of SSL 3 support altogether. Who runs ssl 3 anyway...? Well... There are 100 something customers whose traffic is 100% SSL 3. This is mainly Internet of Things / Machine-to-Machine (M2M) communication. Very few customers have 10%-100% of their traffic being SSL 3 traffic. But from 10% to 0% of SSL 3 traffic, the number of customers linearly increases. And contrary to what you would expect, for customers with very little SSL3 traffic, this traffic is very important to them. This is traffic for cars that need a firmware update, game consoles that need a firmware update, or smart TV's that need an update. I.e. old embedded devices that can only talk SSL 3 and thus critically depend on availability of a server supporting it. Quote: "If you get down to the long tail it gets awfully thorny"

Server Name Indication (SNI) is a hint from the client to the server what identity (i.e. domain name) the server should have. This is useful if you want to host several domains on a single server with a single IP address and you need to know which server certificate to show to the client in the SSL setup phase. The problem is: SNI was not implemented on Windows XP, and also does not exist on Android 2.2 Froyo and below. Froyo is still the standard on cheap phones in the third world. Until april 2014 (when Microsoft pulled the plug on XP), Akamai saw that 20% of requests did not support SNI, going down to 15% after that. Akamai expects that they will have usable IPv6 before SNI gets universally supported ;-)

Lessons learned from all this:

- We need overlapping windows of safety.

- Better a planned change every year than a crisis every 5 years.

- Design for obsolescence.

Session 12: TLS Practicalities, cont.

"Protecting Data in Untrusted Locations - An exercise in real-world threat modelling" by Jan Schaumann (Twitter)

Jan talked about the challenges Twitter faced when it decided to set up a point-of-presence (PoP) in Brazil for the soccer worldcup, that should also terminate the TLS session. In other words, the TLS keys needed to be present there somehow.… (other Twitter PoPs don't terminate TLS).

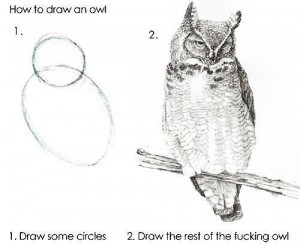

He had funny slides with Roadrunner and Wile E. Coyote (one of my

favourite cartoons back then). And used this image  to illustrate the difference between theory and

practice.

to illustrate the difference between theory and

practice.

They first considered using HSMs, but that would have been too expensive and hard to arrange because of the short schedule. Instead they went for a HSM light approach, using TPM as a trust anchor to boot a server as trusted and then load the image (and keys) remotely.

In essence, the approach is (as always) to minimise the Return on Investment (ROI) for the attacker. In this case by increasing effort (by requiring physical access, the need to attack a running system, and staying unnoticed for a prolonged amount of time) and decreasing value of the TLS keys (using froward secrecy, tightly scoping the certificates, and making them shortlived).

"TLS 1.3" by Eric Rescorla (Mozilla)

Goals for TLS 1.3 are

- Clean up: remove unused and unsafe features.

- Improve privacy: encrypt more of the handshake.

- Improve latency: 1-RTT handshake for naive clients, and 0-RTT handshake for repeat connections.

- continuity: maintain existing important use cases (because you can't design from scratch).

The following things have been removed from TLS 1.3

- Static RSA key exchange. You can still use RSA certificates, but with ECDHE or SHE.

- Compression, because nobody knows how to implement compression safely and generically.

- Non authenticated ciphers, i.e. all ciphers are authenticated encryption in TLS 1.3.

- custom (EC)DHE groups removed. TLS 1.3 only uses predefined groups (to be defined).

- Renegotiation: it was confusing for applications and was a source of vulnerabilities.

Of these, renegotiation needs some extra consideration. Why did we want renegotiation anyway. It is used for connection re-keying (especially for connections that are live for months which is not that uncommon), and it is used for adding client authentication (because this will encrypt the client certificate, which would not be the case if you do client authentication immediately when setting up the initial connection). We need to re-add at least some of this, e.g. client authentication, re-sharing of keys, session resumption (with tickets), and extensions.

In TLS 1.3 the basic idea for the handshake is optimistic keying. The client provides a list of (EC)DHE key shares from expected groups. The server responds with authenticated ECDHE share (if it knows any of the groups selected by the client, otherwise the server corrects. Based on these two messages the client and server can derive the master key straight away. This improves privacy because you can start encrypting straight away. TLS 1.3 uses different keys to encrypt application data and handshake and confirmation messages.

We need backward compatibility (because there will be non 1.3 clients and servers out there). Therefore the client key shares must be embedded into client extensions (to prevent TLS 1.2 servers from choking on this)

Mid-connection client authentication is gone. Everybody is hoping we can hold the line on this one because it makes live so much simpler

First draft expected end Q1, beginning Q2. Finalising will take place in the remainder of 2015. Implementations already planned by NSS, OpenSSL and miTLS.

Session 13: Secure Communications Protocols

"DP5: Privacy-preserving presence protocols" by Ian Goldberg (University of Waterloo)

Messaging apps like xmpp allow users to see who of their friends are online. In xmpp the server knows the set of all users, and the friend graph (i.e. which users are friends). Whenever you sign in to the xmpp server, the server starts monitoring your status. It will also forward status information about your friends to the app.

The problem is that the friend graph is important meta data, which can impose a significant privacy (or even security) risk. It would therefore be nice if we could inform users about the status for their friends without the server learning this (and not learning the friend graph).

Such a privacy preserving presence protocol should allow friend registration, presence registration, presence status query and friend suspension/revocation. If we assume secure end nodes (and that the crypto cannot be broken), the protocol should protect against a global passive adversary, dishonest users and a threshold of honest infrastructure servers. It should protect the privacy and integrity of presence (and any auxiliary data), privacy of the friend graph, and achieve unlinkability: requests for status information cannot be linked to previous request from the same user. Also, if Alice suspends or revokes friendship with Bob, this should be indistinguishable (for Bob) from Alice suddenly being offline forever.

DP5, the Dagstuhl Privacy Protecting Presence Procotol P - the P is for extra privacy, achieves this.

The main idea is as follows. Friends like Alice and Bob somehow have pairwise shared keys Kab. When Alice logs in he uploads his status and auxiliary information to the DP5 server encrypted using this key. Alice queries the DP5 server for friends using a private information retrieval (PIR) protocols. There are quite efficient PIR systems these days, like Percy++ (C++).

The strawman version (not the actual version) works as follows. Time is divided into epochs ti. When Alice comes online at such an epoch, then for every friend (users keep their own list of friends locally) Alice generates a key K and identifier ID using PRFKab(ti) (where PRF is a pseudo-random function). It then encrypts the status and auxiliary information using authenticated encryption under key K, and uploads the resulting ciphertext C together with ID as a tuple (ID,C) to the DP5 server.

To check the status of his friends at epoch ti it computes PRFKab(ti) for each of his friends, and queries the server for a tuple ID using PIR. If it exists, Bob decrypts the corresponding ciphertext C with K.

Because epochs are short (at most a few minutes long), the number of IDs is large (because you have separate IDs for each friend and each epoch). This is bad for PIR whose complexity depends on the size of the database.

We use David Wheeler's famous quote: "any problem in computer science can be solved by another layer of indirection (and leads to other problems)".

The idea of the actual DP5 protocol is to use two timescales: one long (where each epoch Ti is a day long) and one short (where each epoch ti is a minute long). The long timescale determines how long it takes (at most) for a revocation to take effect. The short time scale determines how long it takes before a status change is passed on.

We now run two instances of the above strawman protocol. The first (for the long time scale Ti) stores a presence key P for Alice encrypted using authenticated encryption against a key K derived from PRF_K_ab(Ti) for each of Alice's friends.

The second instance (for short time scale ti) uses PRFP(ti) to derive K and ID (instead of using Kab). Alice needs to do this only once now (instead of doing this for all her friends).

They have implemented the code for the core of DP5 in C++.

"Innovation in end-to-end encrypted communication tools" by Joseph Bonneau (Princeton)

Especially the second part of the talk about research challenges in secure communication protocols was interesting. These are the challenges Joseph mentioned.

- How to secure endpoint platforms?

- Secure software distribution & update.

- How to establish peer trust? How to implement key verification in a way that actually it works for people? There is no hard data about what works. (If we approach this at a slightly higher level of abstraction: how can people be sure they are talking to whomever they mean to talk to.) Create transparency logs (like certificate transparency): that log all keys key servers distributed so if the change keys there is a public record for this. Interesting alternative approaches in this space are keybase.io and OneName., that offload the web of trust to social media.

- How to recover from lost keys?

- Support for multiple devices (to access the same resource, e.g. cloud storage). There are different models for how this could work (depends on what people want, and we don't really know): e.g. 1 key shared with all devices, independent keys per device, different keys signed by one master device, or different keys cross signed by all.

- How to implement forward secrecy in an asynchronous settings, e.g. ratcheting with Axolotl?

- Group messaging has several interesting problems, like how to ensure consistency of the communication among all parties (cf the ice cream problem).

- Anonymous messaging transport.

- How to prevent spam and abuse? Content filtering is hard on encrypted content.

If you want to stay informed about news in this area, subscribe to messaging@moderncrypto.org.

Session 14: Short Talks 3

"Lessons Learned from Implementing Privacy-Preserving Protocols for Smart Meters" by Benessa Defend and Klaus Kursawe (ENCS)

Main takeaway: Lego is great to explain one-way functions and homomorphic one-way functions ;-) Really!

"Lightweight Authentication Protocols on Ultra-Constrained RFIDs - Myths and Facts" by Matthias Hamann, Frederik Armknecht and Vasily Mikhalev (Universitat Mannheim)

The specific hardware capabilities of low cost RFID tags are often hardly known to academic community. Based on some academic literature and particularly interviews with industry experts, they compiled the following hardware constraints for RFID tags that cost $0.05-$0.10 (this includes the so called EPC tags).

- Area: at most 2.000 gate equivalent (GE). For comparison purposes, AES requires 3400 GE, PRESENT requires 1100 GE.

- Non-volatile memory: at most 2048 bits.

- Power: at most 10 microwatt.

- Clock speed: at most 100 kHZ (which translates to 15k clock cycles per authentication).

- Transmission bandwidth: 200kbit/s.

- RNG: at most 128 (truly) random bits per authentication

With these constraints, they then checked to see which type of authentication protocols can indeed be implemented on such tags. They found that a simple challenge response protocol using alight weight cipher can easily be implemented. LPN based protocols, like HB+ (that have specifically been designed to be implementable on such tags) are not implementable on such tags by far: they need 225k random bits per authentication, and need to transmit 261k bits from prover to verifier.

Conclusions

I skipped the last three talks because I had to catch a plane back home. Like last year, RWC was a really good workshop with loads of interesting presentations. See you guys next year, perhaps.. ;-)

[…] The 2015 edition of the Real World Cryptography workshop was held in London January 7-9. Here are some personal highlights of day #1 (I have not included a summary of all talks, and do not pay an equal amount of attention to all talks). I have also made reports for day #2 and day #3. […]

[…] my summary of the same talk at Real World Cryptography workshop (Session […]

[…] other stuff « Highlights of the Real World Cryptography 2015 workshop, day #1 Highlights of the Real World Cryptography 2015 workshop, day #3 […]